You've built a prototype that works beautifully in demos. Your LLM answers questions, generates summaries, and impresses stakeholders. Then you start planning production deployment, and a single question stops everything cold: "What happens when it hallucinates in front of a customer?"

This moment—when hallucination rates shift from an interesting technical curiosity to a business liability—is forcing a fundamental rethink of production AI architecture. With 57% of organizations now running AI agents in production, we've moved past the experimental phase. The challenge is no longer whether AI can work, but whether it can work reliably at scale.

The Architecture Paradigm Shift

Here's the uncomfortable truth: hallucinations aren't a bug in your implementation. They're a consequence of how large language models are trained and evaluated. OpenAI's research reveals that next-token prediction objectives and standard leaderboards reward confident guessing over calibrated uncertainty. Models learn to bluff because bluffing is what maximizes their training and benchmark scores.

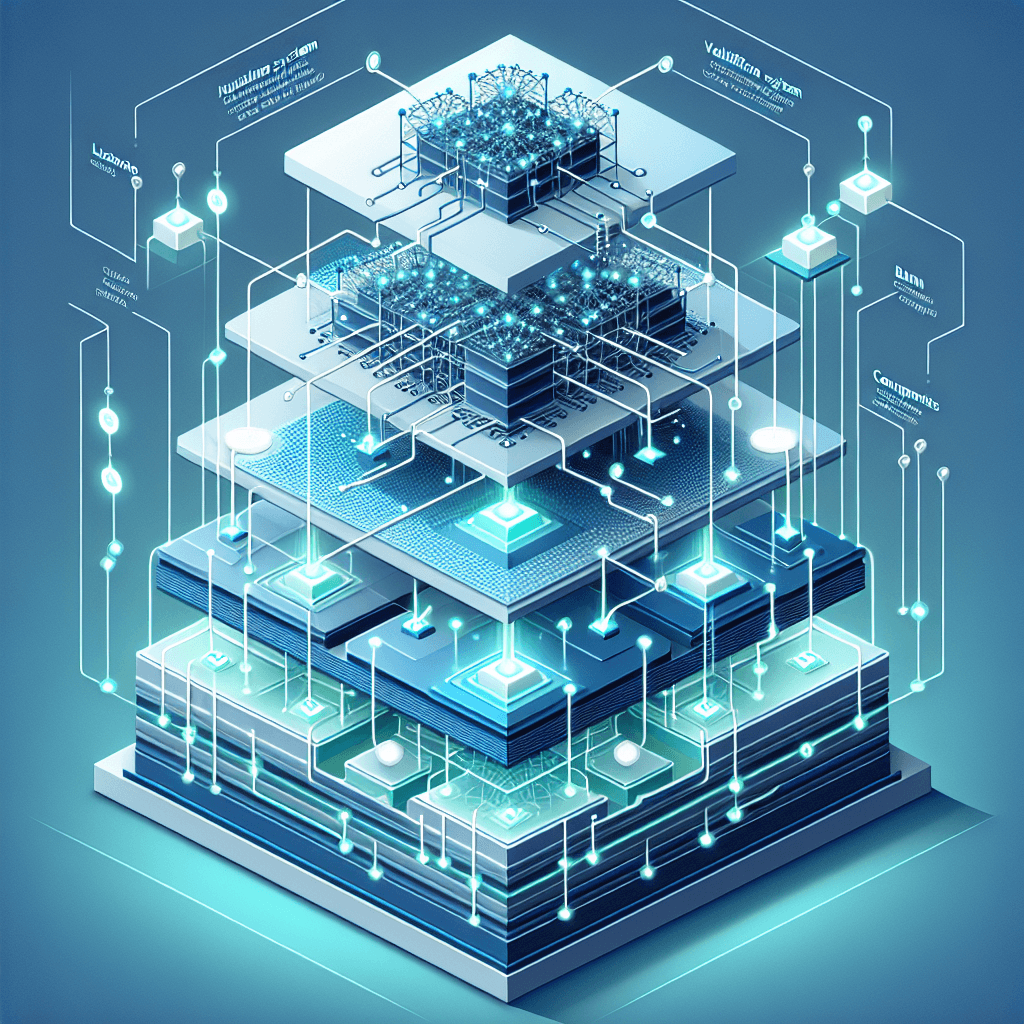

This realization demands an architectural response, not just better prompts. Production-grade systems are being redesigned around a core principle: hallucinations are an inherent characteristic to be systematically mitigated, not a defect that can be fully eliminated.

What does this look like in practice? Instead of expecting a single model to be perfectly accurate, production architectures now layer complementary controls:

- Provenance tracking: Every generated claim must be attached to a traceable source or carry an explicit uncertainty label

- Retrieval-augmented generation (RAG): Grounding responses in verifiable evidence sources rather than parametric memory alone

- Verification workflows: Automated and human-in-the-loop validation, particularly for high-stakes outputs

- Graceful abstention: Systems that explicitly say "I don't know" rather than fabricate confident-sounding nonsense

Why Single-Model Reliance Fails in Production

The gap between demo and production isn't just about scale; it's about accountability. In development, a 5% hallucination rate might seem acceptable. In production, that 5% represents real customer interactions, potential legal liability, and reputational damage. At 10,000 interactions a day, it means roughly 500 conversations daily that contain a fabricated claim.

Consider a financial services application providing investment guidance. A hallucinated stock recommendation isn't just inaccurate; a customer may act on it, exposing the firm to legal and regulatory liability. A healthcare triage system that invents symptoms or contradicts medical records creates patient safety risks. These aren't edge cases; they're the reason organizations so often cite quality as a top barrier to AI deployment.

"Production-grade reliability requires architectural transparency where outputs include confidence scores, source attribution, and graceful abstention rather than fabrication when facing uncertainty."

This is why leading organizations are abandoning the "prompt engineering will fix it" approach. Mitigation isn't just prompt-side—it requires end-to-end design spanning data quality, retrieval systems, fine-tuning methodologies, and human oversight patterns.

The Three Pillars of Reliability Architecture

1. Structured Provenance and Confidence Scoring

Production systems need to expose not just answers, but the evidence and level of certainty behind them. This means:

- Implementing citation mechanisms that link every factual claim to source documents

- Generating calibrated confidence scores rather than uniformly confident outputs

- Designing UX patterns that surface uncertainty to end users appropriately

When a model generates a response, the architecture should capture: What sources informed this? How certain is each claim? Where did the model extrapolate beyond available data?
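
One way to make those three questions concrete is to store them alongside every response. The sketch below is illustrative only: the `Claim` and `AnnotatedResponse` names, the field layout, and the example document ID are assumptions, and the confidence values are presumed to come from some upstream calibration step that is not shown.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Claim:
    """One factual statement in a response (hypothetical schema)."""
    text: str
    confidence: float  # assumed to be a calibrated probability in [0, 1]
    source_ids: List[str] = field(default_factory=list)  # empty => no source

    @property
    def is_extrapolated(self) -> bool:
        # A claim with no attached source is treated as extrapolation.
        return not self.source_ids


@dataclass
class AnnotatedResponse:
    claims: List[Claim]

    def unsupported_claims(self) -> List[Claim]:
        """Claims where the model went beyond the available sources."""
        return [c for c in self.claims if c.is_extrapolated]

    def min_confidence(self) -> Optional[float]:
        """Confidence of the weakest claim, or None for an empty response."""
        return min((c.confidence for c in self.claims), default=None)


# Example values are made up for illustration.
response = AnnotatedResponse(claims=[
    Claim("The refund window is 30 days.", 0.93, ["policy-doc-12"]),
    Claim("Refunds are usually processed within a week.", 0.55),
])

for claim in response.unsupported_claims():
    print("No source for:", claim.text)
```

Keeping provenance at the claim level, rather than per response, lets the UI flag the one unsupported sentence instead of discarding an otherwise well-grounded answer.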

2. Multi-Layer Retrieval and Verification

Properly implemented RAG patterns are reported to reduce hallucinations by up to 80%, but "properly implemented" carries significant weight. Production-ready RAG requires:

- High-quality knowledge bases: Curated, versioned, and regularly validated source material

- Semantic retrieval: Moving beyond keyword matching to contextual relevance

- Evidence ranking: Prioritizing authoritative sources and flagging conflicting information

- Retrieval observability: Monitoring what documents were retrieved and how they influenced outputs

The architectural decision here isn't "RAG or not" but rather how to instrument RAG pipelines with sufficient observability to debug failures and measure ongoing reliability.
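
As a rough sketch of what instrumenting retrieval might look like, the snippet below records every retrieval call (query, document IDs, scores) so a bad answer can later be traced to the evidence behind it. Everything here is a stand-in: the in-memory `DOCS` corpus, the versioned IDs, the `RETRIEVAL_LOG` list, and especially the word-overlap `score` function, which is a placeholder for real semantic retrieval rather than an example of it.

```python
import json
import time
from typing import Dict, List, Tuple

# Toy corpus with versioned IDs; a real system would use a curated knowledge base.
DOCS: Dict[str, str] = {
    "kb-1@v3": "Refunds are accepted within 30 days of purchase.",
    "kb-2@v1": "Shipping takes 5 business days within the EU.",
}

RETRIEVAL_LOG: List[dict] = []  # stand-in for a real log sink or tracing backend


def score(query: str, text: str) -> float:
    """Placeholder relevance: fraction of query words present in the document."""
    query_words = set(query.lower().split())
    doc_words = set(text.lower().replace(".", "").split())
    return len(query_words & doc_words) / len(query_words) if query_words else 0.0


def retrieve(query: str, k: int = 1) -> List[Tuple[str, float]]:
    """Return the top-k (doc_id, score) pairs and log what was retrieved."""
    ranked = sorted(
        ((doc_id, score(query, text)) for doc_id, text in DOCS.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )[:k]
    RETRIEVAL_LOG.append({
        "ts": time.time(),
        "query": query,
        "k": k,
        "results": [{"doc_id": d, "score": round(s, 3)} for d, s in ranked],
    })
    return ranked


hits = retrieve("refunds within 30 days")
print(json.dumps(RETRIEVAL_LOG[-1]["results"]))
```

Logging the document version together with the score is the part worth keeping from this sketch: when an answer turns out to be wrong, you can tell whether retrieval missed, ranked poorly, or served stale content.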

3. Training for Truthfulness, Not Just Capability

Standard training approaches optimize for helpfulness and capability, inadvertently teaching models to fabricate plausible-sounding answers rather than admit ignorance. Production architectures increasingly incorporate:

- Fine-tuning on domain-specific ground truth: Aligning models with verified facts in your specific vertical

- Refusal training: Explicitly rewarding models for abstaining when uncertain

- Adversarial testing: Systematically probing for hallucination patterns during evaluation

This isn't just an ML problem—it's an architectural one. Your deployment pipeline needs to support continuous evaluation, A/B testing of reliability interventions, and automated regression detection when hallucination rates drift.
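
A minimal sketch of automated regression detection, assuming each evaluation run yields a list of per-output labels marking whether the output was judged a hallucination. The function names, the 2-point tolerance, and the sample runs are all hypothetical; a real pipeline would likely replace the fixed tolerance with a statistical test sized to the evaluation set.

```python
from typing import Sequence


def hallucination_rate(labels: Sequence[bool]) -> float:
    """labels[i] is True when evaluated output i was judged a hallucination."""
    return sum(labels) / len(labels) if labels else 0.0


def has_regressed(
    baseline: Sequence[bool],
    candidate: Sequence[bool],
    tolerance: float = 0.02,  # assumed acceptable increase, in absolute terms
) -> bool:
    """Flag the candidate if its rate exceeds the baseline by more than tolerance."""
    return hallucination_rate(candidate) - hallucination_rate(baseline) > tolerance


# Made-up evaluation results: 5 of 100 flagged before, 9 of 100 after a change.
baseline_run = [False] * 95 + [True] * 5
candidate_run = [False] * 91 + [True] * 9

if has_regressed(baseline_run, candidate_run):
    print("Hallucination rate regressed; block the release.")
```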

The Economics of Reliability

These architectural changes come with costs: increased latency from retrieval and verification steps, higher computational overhead for confidence scoring, and human review workflows that slow throughput. But the economics shift dramatically when you consider failure costs.

A hallucination in a customer-facing system might require manual remediation, damage control, and potentially legal review. The cost of one significant failure often exceeds the infrastructure investment in prevention. This is particularly acute in regulated industries where AI outputs create audit trails and compliance obligations.
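
To see how that trade-off might be reasoned about, here is a back-of-the-envelope calculation. Every number in it is an invented placeholder, not data from any real deployment, and the simple multiplicative cost model is itself an assumption; substitute your own traffic, rates, and incident costs.

```python
def expected_failure_cost(
    requests: int,
    hallucination_rate: float,
    harmful_fraction: float,
    cost_per_incident: float,
) -> float:
    """Expected cost of harmful hallucinations over a period (simplified model)."""
    return requests * hallucination_rate * harmful_fraction * cost_per_incident


# Hypothetical inputs for one month of traffic.
REQUESTS = 100_000
HARMFUL_FRACTION = 0.01      # share of hallucinations that cause a real incident
COST_PER_INCIDENT = 2_000.0  # remediation, support time, legal review
MITIGATION_COST = 50_000.0   # retrieval, verification, and review overhead

before = expected_failure_cost(REQUESTS, 0.05, HARMFUL_FRACTION, COST_PER_INCIDENT)
after = expected_failure_cost(REQUESTS, 0.01, HARMFUL_FRACTION, COST_PER_INCIDENT)
net_benefit = (before - after) - MITIGATION_COST

print(f"Expected failure cost before: {before:,.0f}")
print(f"Expected failure cost after:  {after:,.0f}")
print(f"Net benefit of mitigation:    {net_benefit:,.0f}")
```

The model ignores tail risk: a single severe incident in a regulated setting can dwarf the average cost per incident, which only strengthens the case for prevention.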

"Organizations are transitioning from single-model reliance to multi-layer systems that integrate observability platforms, verification workflows, and human-in-the-loop validation—particularly in high-stakes domains like finance and healthcare."

Making the Architectural Transition

If you're moving AI applications toward production, here are concrete architectural decisions to evaluate:

Start with observability: Before optimizing reliability, instrument your system to measure it. Track hallucination rates by category, monitor retrieval effectiveness, and capture user corrections as ground truth signals.
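
As a starting point, tracking rates by category can be as simple as the aggregation below. It assumes you already have labeled events, for instance from user corrections or reviewer judgments; the `(category, was_hallucination)` event shape and the category names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def rates_by_category(events: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the hallucination rate per category from labeled events."""
    totals: Dict[str, int] = defaultdict(int)
    flagged: Dict[str, int] = defaultdict(int)
    for category, was_hallucination in events:
        totals[category] += 1
        flagged[category] += int(was_hallucination)
    return {category: flagged[category] / totals[category] for category in totals}


# Made-up events: each pair is (query category, judged as hallucination?).
events = [
    ("pricing", True),
    ("pricing", False),
    ("policy", False),
    ("policy", False),
]

rates = rates_by_category(events)
for category, rate in sorted(rates.items()):
    print(f"{category}: {rate:.0%}")
```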

Layer defenses progressively: Don't try to implement perfect reliability on day one. Start with basic RAG, add confidence thresholding, then introduce human review for low-confidence outputs. Each layer compounds reliability improvements.
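
The progression described above could be sketched as a small routing function: abstain when retrieval found nothing to ground the answer, send low-confidence answers to a reviewer, and deliver the rest automatically. The `Route` names and the 0.85 threshold are assumptions chosen for illustration, and the confidence score is presumed to come from an upstream scoring step.

```python
from enum import Enum


class Route(Enum):
    AUTO_SEND = "auto_send"
    HUMAN_REVIEW = "human_review"
    ABSTAIN = "abstain"


def route(confidence: float, has_sources: bool, threshold: float = 0.85) -> Route:
    """Decide what to do with a drafted answer (hypothetical policy)."""
    # Layer 1 (RAG): with no retrieved evidence, do not answer at all.
    if not has_sources:
        return Route.ABSTAIN
    # Layers 2 and 3: low-confidence answers go to a human reviewer.
    if confidence < threshold:
        return Route.HUMAN_REVIEW
    return Route.AUTO_SEND


for confidence, has_sources in [(0.95, True), (0.60, True), (0.95, False)]:
    print(confidence, has_sources, route(confidence, has_sources).value)
```

Because each layer is a separate branch, you can ship the abstention check first and add the review queue later without restructuring the pipeline.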

Design for graceful degradation: Build UX patterns that handle uncertainty explicitly. "Based on available information" hedges, confidence indicators, and explicit source citations turn hallucination risk into transparent limitations.

Invest in evaluation infrastructure: Production reliability requires continuous measurement. Build adversarial test suites, automated accuracy benchmarking, and feedback loops that surface failures quickly.
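
One simple adversarial check is to ask questions your knowledge base cannot answer and measure how often the system abstains instead of inventing something. The sketch below assumes a callable that maps a question to an answer string; `stub_model`, the abstention phrase, and the two test questions are hypothetical stand-ins for a real model call and a real test suite.

```python
from typing import Callable, List

ABSTAIN = "I don't know"  # assumed canonical abstention phrase


def abstention_rate(answer_fn: Callable[[str], str], unanswerable: List[str]) -> float:
    """Share of unanswerable questions on which the system abstains."""
    if not unanswerable:
        return 0.0
    abstained = sum(1 for q in unanswerable if answer_fn(q).strip() == ABSTAIN)
    return abstained / len(unanswerable)


def stub_model(question: str) -> str:
    """Hypothetical stand-in for a model call; it bluffs on 'CEO' questions."""
    if "ceo" in question.lower():
        return "Jane Smith"  # fabricated answer, i.e. a hallucination
    return ABSTAIN


# Questions with no answer in the (imaginary) knowledge base.
suite = [
    "Who is the CEO of our fictional subsidiary?",
    "What is the warranty period for product ZX-0000?",
]

print(f"Abstention rate on unanswerable questions: {abstention_rate(stub_model, suite):.0%}")
```

Exact string matching on the abstention phrase is brittle; a production suite would more plausibly use a classifier or structured output field to detect abstention.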

The Path Forward

Moving AI from experiment to production raises the bar on accountability, not just on scale. As the industry matures, we're learning that reliability isn't a model problem; it's a systems problem. The most capable model with poor architectural support will often prove less reliable than a less capable model wrapped in proper provenance, verification, and observability layers.

The organizations succeeding in production aren't waiting for perfect models. They're building architectures that acknowledge and mitigate inherent limitations while delivering real business value. They've accepted that the question isn't "How do we eliminate hallucinations?" but rather "How do we build systems reliable enough for our use case despite hallucinations?"

That architectural mindset—combining technical controls, process safeguards, and transparent limitations—is what separates demos from production-grade AI systems. The good news? We now have proven patterns for getting there. The challenge is committing to the architectural complexity that production reliability demands.

What reliability thresholds does your use case require? How would your architecture need to change to achieve them? Those questions, more than model capabilities, will determine your production AI success.