Remember when choosing a cloud provider felt like a marriage commitment? The AI landscape in 2024 and beyond is moving even faster, with new models dropping weekly and yesterday's state-of-the-art becoming tomorrow's baseline. Yet many teams are still architecting their AI systems as if they'll be using the same model forever—a decision that's increasingly looking like technical debt waiting to happen.

The good news? A quiet revolution is underway. Leading engineering teams are fundamentally rethinking how they build AI systems, and the shift isn't just about swapping out models—it's about designing for inevitable change.

The Real Cost of Model Monogamy

The pain of single-model lock-in manifests in ways that don't show up in your initial proof-of-concept. Your carefully crafted prompts become proprietary assets tied to one vendor's format. Your evaluation metrics get optimized for one model's quirks. Your cost structure becomes hostage to a single provider's pricing decisions.

But here's what makes 2026 different: the model landscape is no longer a slow march of incremental improvements. We're seeing specialized models emerge that excel at narrow tasks, open-source alternatives achieving commercial-grade performance, and pricing models that vary wildly across providers. The teams that win aren't those who picked the "right" model—they're the ones who built systems that can adapt when the landscape shifts.

According to recent research, 67% of organizations are actively working to avoid high dependency on a single AI technology provider. This isn't just risk management theater—it's a pragmatic response to a market that's proving its volatility quarter after quarter.

The Multi-Model Paradigm Shift

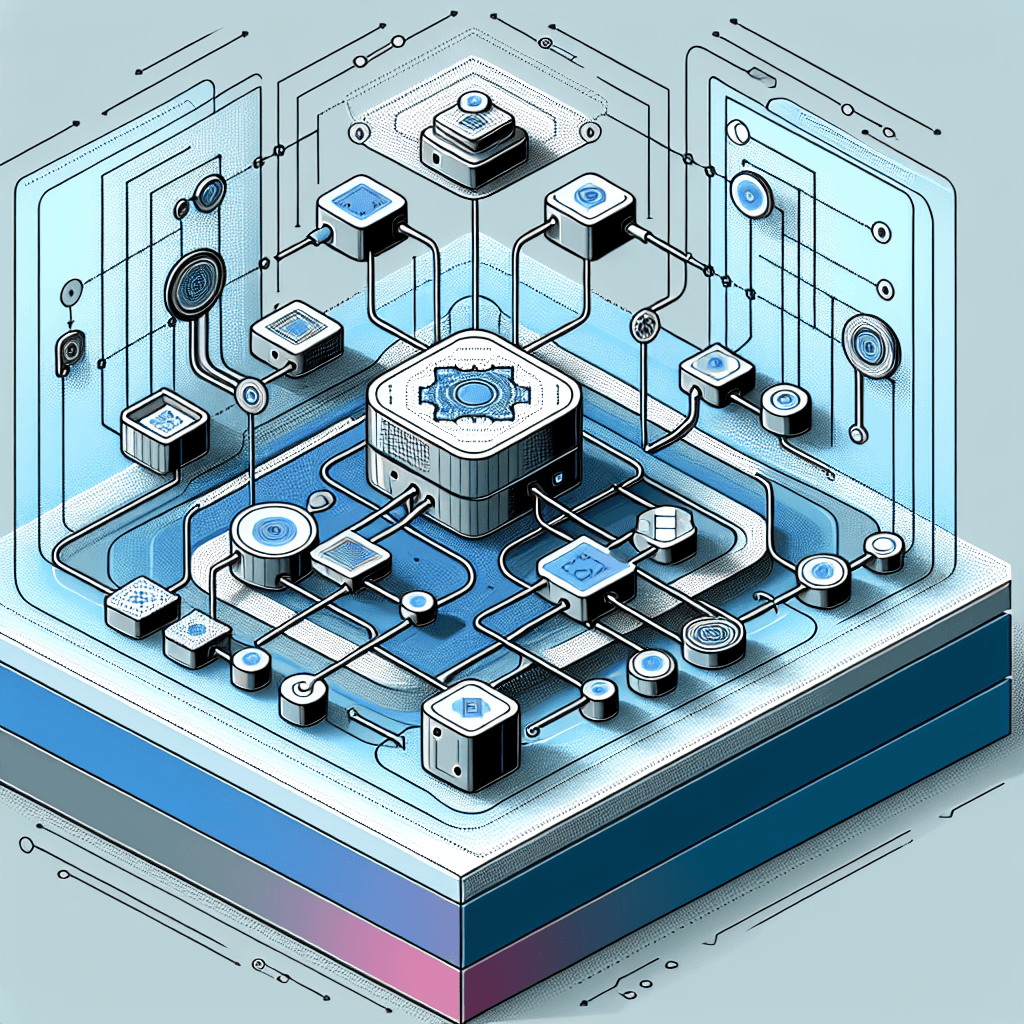

The most significant architectural shift happening right now is the move from "one model to rule them all" to intelligent task routing across multiple models. Document processing provides a perfect example: instead of forcing a single model to handle everything from table extraction to handwriting recognition, modern pipelines break documents into components and route each to the model that handles it best.

This approach delivers tangible benefits:

- Cost optimization: Use inexpensive models for classification and routing, reserving expensive frontier models only for complex generation tasks

- Performance gains: Leverage specialized models that excel at specific tasks rather than relying on general-purpose compromises

- Risk mitigation: When one provider has an outage or deprecates a model, your system degrades gracefully rather than failing completely
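
As a minimal sketch of the document-processing example above, the snippet below routes each document component to a handler by type and falls back to an inexpensive default. The `Component` type, the `ROUTES` table, and the three model functions are hypothetical stand-ins for real model calls, not any particular framework's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Component:
    kind: str      # e.g. "table", "handwriting", "text"
    content: str


# Hypothetical stand-ins: each would wrap a call to a different model.
def cheap_text_model(content: str) -> str:
    return f"[small-model] {content}"


def table_model(content: str) -> str:
    return f"[table-model] {content}"


def handwriting_model(content: str) -> str:
    return f"[handwriting-model] {content}"


# Specialized handlers by component kind; anything else uses the cheap default.
ROUTES: dict[str, Callable[[str], str]] = {
    "table": table_model,
    "handwriting": handwriting_model,
}


def process_document(components: list[Component]) -> list[str]:
    """Send each component to the handler registered for its kind."""
    results = []
    for component in components:
        handler = ROUTES.get(component.kind, cheap_text_model)
        results.append(handler(component.content))
    return results
```

Adding a specialized model for a new component type then means adding one entry to `ROUTES` rather than changing the pipeline.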

Leading teams are implementing this with orchestration frameworks like LangChain and LlamaIndex, combined with open protocols like Anthropic's Model Context Protocol (MCP), which standardizes how models connect to external tools and data sources.

The Abstraction Layer: Your Insurance Policy

If multi-model routing is the strategy, abstraction layers are the implementation that makes it practical. The most effective defense against vendor lock-in involves building a bridge between your application logic and the underlying LLMs.

Think of it as the same principle that made ORMs valuable for databases—you write to an interface, and the underlying implementation can change without rewriting your application. In the AI context, this means:

Unified API Interfaces

These are platforms and internal abstractions that normalize inputs and outputs across providers. Your application code calls generateResponse(prompt, context) regardless of whether the underlying model is GPT-4, Claude, Gemini, or an open-source alternative. The abstraction layer handles provider-specific formatting, authentication, rate limiting, and error handling.
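
One way this could look in Python is sketched below, with `generate_response` as the snake_case counterpart of the generateResponse call mentioned above. `ModelProvider`, `ProviderA`, `ProviderB`, and `LLMClient` are illustrative names; the adapters return canned strings where a real one would call a vendor SDK and handle authentication, rate limits, and errors.

```python
from typing import Protocol


class ModelProvider(Protocol):
    """The interface application code depends on."""

    def complete(self, prompt: str, context: str) -> str: ...


class ProviderA:
    """Hypothetical adapter; a real one would call a vendor SDK here."""

    def complete(self, prompt: str, context: str) -> str:
        return f"A:{context}|{prompt}"


class ProviderB:
    """A second hypothetical adapter with its own input formatting."""

    def complete(self, prompt: str, context: str) -> str:
        return f"B:{prompt} (ctx={context})"


class LLMClient:
    """Application-facing entry point; unaware of which vendor is behind it."""

    def __init__(self, provider: ModelProvider) -> None:
        self._provider = provider

    def generate_response(self, prompt: str, context: str = "") -> str:
        # Output normalization lives here, so callers see one shape.
        return self._provider.complete(prompt, context).strip()
```

Swapping vendors then means constructing `LLMClient` with a different adapter; the calling code stays the same.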

Provider-Agnostic Infrastructure

Deployment infrastructure matters as much as the API layer. Using vendor-agnostic tools like Kubernetes for orchestration and Terraform for infrastructure-as-code means your entire stack can migrate between clouds or on-premises environments. As one CTO put it: these tools "can be the difference between overnight collapse and graceful migration" when circumstances change.

Standardized Prompt Management

One of the sneakiest forms of lock-in is prompt engineering that only works with one model's specific format and behavior. Smart teams are building prompt management systems that separate the intent (what you want the model to do) from the implementation (how you phrase it for a specific model). This allows you to maintain a library of prompts that can be adapted across providers rather than rewritten from scratch.
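
A rough sketch of that separation, assuming two hypothetical models (`model_x` and `model_y`) that prefer different prompt layouts: the intent is stored once as data, and a per-model template decides the phrasing. The names and template shapes are illustrative only.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptIntent:
    """What we want done, independent of any model's preferred phrasing."""
    task: str
    constraints: tuple[str, ...]


# Hypothetical per-model layouts: one plain-text, one tag-delimited.
RENDERERS = {
    "model_x": Template("Task: $task\nRules:\n$rules\nInput: $input"),
    "model_y": Template(
        "<task>$task</task>\n<rules>\n$rules\n</rules>\n<input>$input</input>"
    ),
}


def render(intent: PromptIntent, model: str, user_input: str) -> str:
    """Turn one stored intent into the prompt text for a given model."""
    rules = "\n".join(f"- {c}" for c in intent.constraints)
    return RENDERERS[model].substitute(
        task=intent.task, rules=rules, input=user_input
    )
```

Supporting another provider then means adding a template, while the library of intents stays untouched.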

Building for Change: Practical Implementation Patterns

The gap between understanding the need for flexibility and actually implementing it comes down to specific architectural decisions. Here's what the pattern looks like in practice:

Start with the Gateway Pattern

Gartner predicts that by 2028, 70% of organizations building multi-LLM applications will use AI gateway capabilities, up from less than 5% in 2024. An AI gateway sits between your application and model providers, handling routing, fallbacks, caching, and observability. This single component gives you the control point you need to swap providers without touching application code.
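
To illustrate the idea at its smallest, here is a toy in-process gateway with ordered fallbacks, a response cache, and a log standing in for observability. `AIGateway` and `ProviderError` are hypothetical names, and the providers are plain callables; a production gateway would typically be a separate service with timeouts, retries, and metrics.

```python
from typing import Callable


class ProviderError(Exception):
    """Raised by a provider call that fails (outage, rate limit, and so on)."""


class AIGateway:
    def __init__(self, providers: list[tuple[str, Callable[[str], str]]]) -> None:
        self._providers = providers        # ordered by preference
        self._cache: dict[str, str] = {}   # prompt -> response
        self.log: list[str] = []           # minimal stand-in for observability

    def complete(self, prompt: str) -> str:
        # Serve repeated prompts from the cache.
        if prompt in self._cache:
            self.log.append("cache_hit")
            return self._cache[prompt]
        # Try each provider in order, falling back on failure.
        for name, call in self._providers:
            try:
                result = call(prompt)
            except ProviderError:
                self.log.append(f"{name}:failed")
                continue
            self.log.append(f"{name}:ok")
            self._cache[prompt] = result
            return result
        raise ProviderError("all providers failed")
```

Application code only ever calls `complete`, so reordering or replacing providers is a change to the gateway's configuration.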

Implement Capability-Based Routing

Rather than hard-coding model names in your application, route based on capabilities: FAST_CLASSIFICATION, LONG_CONTEXT_ANALYSIS, CODE_GENERATION. Your routing layer maps these capabilities to specific models, and that mapping can evolve as the landscape changes. Today's FAST_CLASSIFICATION might use a small fine-tuned model; next quarter it might use a different provider's offering that's faster and cheaper.
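
A minimal sketch of such a mapping follows. The capability names come from the paragraph above; the model identifiers are made up for illustration, and in practice the mapping would more likely live in configuration than in code.

```python
from enum import Enum


class Capability(Enum):
    FAST_CLASSIFICATION = "fast_classification"
    LONG_CONTEXT_ANALYSIS = "long_context_analysis"
    CODE_GENERATION = "code_generation"


# Hypothetical model identifiers; swap these without touching callers.
CAPABILITY_MAP: dict[Capability, str] = {
    Capability.FAST_CLASSIFICATION: "small-finetuned-v1",
    Capability.LONG_CONTEXT_ANALYSIS: "long-context-large",
    Capability.CODE_GENERATION: "code-specialist",
}


def resolve_model(
    capability: Capability,
    mapping: dict[Capability, str] = CAPABILITY_MAP,
) -> str:
    """Look up which model currently serves a capability."""
    if capability not in mapping:
        raise LookupError(f"no model configured for {capability.name}")
    return mapping[capability]
```

Application code asks for `Capability.FAST_CLASSIFICATION`; which model answers is a one-line change to the mapping.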

Build Evaluation Into Your Architecture

You can't manage what you don't measure. Implement evaluation frameworks that test your outputs against expected results across different models. This allows you to confidently swap models when you can prove the new option performs as well or better on your specific use cases. Treating agents as systems, and productivity as a function of reduced risk, isn't just philosophy; it's a competitive advantage in 2026.
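
As a rough illustration, a swap decision could be gated on a pass rate over a fixed set of cases. The sketch below assumes a model is any callable from prompt to text and uses a simple substring check as the scoring rule; both are simplifying assumptions, and `pass_rate` and `safe_to_swap` are hypothetical helper names.

```python
from typing import Callable

EvalCase = tuple[str, str]  # (prompt, substring expected in the output)


def pass_rate(model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Fraction of cases whose output contains the expected substring."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    passed = sum(
        1 for prompt, expected in cases
        if expected.lower() in model(prompt).lower()
    )
    return passed / len(cases)


def safe_to_swap(
    current: Callable[[str], str],
    candidate: Callable[[str], str],
    cases: list[EvalCase],
    tolerance: float = 0.0,
) -> bool:
    """True if the candidate scores at least as well, within a tolerance."""
    return pass_rate(candidate, cases) >= pass_rate(current, cases) - tolerance
```

Real evaluations usually need richer scoring (exact match, rubric grading, human review), but the shape stays the same: identical cases, run against each model, compared before a swap.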

The Open Standards Advantage

Here's the critical insight that separates teams who successfully maintain flexibility from those who struggle: adopting open standards in your AI architecture from the beginning is far easier than retrofitting portability after committing to proprietary formats.

This means:

- Using OpenAPI specifications for your internal AI services

- Adopting widely supported formats like OpenAI's chat completions API format (which many providers now support through compatible endpoints) where possible

- Contributing to and building on open-source orchestration tools rather than proprietary platforms

- Storing prompts, evaluations, and fine-tuning data in provider-neutral formats

The goal isn't to avoid using vendor-specific features—it's to isolate them behind abstractions so you can make informed trade-offs between capability and portability.
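
One possible shape for this is sketched below: prompts are stored as plain JSON, and each provider-specific request layout is confined to a single small function. `StoredPrompt` and both converter functions are hypothetical. The two payload shapes only approximate common conventions (a role/content message list, and a layout that takes the system prompt as a separate field), so check real field names against each provider's documentation.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class StoredPrompt:
    """Provider-neutral record; this is what gets stored and versioned."""
    name: str
    system: str
    user: str


def to_json(prompt: StoredPrompt) -> str:
    return json.dumps(asdict(prompt), sort_keys=True)


def from_json(raw: str) -> StoredPrompt:
    return StoredPrompt(**json.loads(raw))


def as_chat_messages(prompt: StoredPrompt) -> list[dict[str, str]]:
    # Assumed shape: system prompt carried as the first message in the list.
    return [
        {"role": "system", "content": prompt.system},
        {"role": "user", "content": prompt.user},
    ]


def as_separate_system(prompt: StoredPrompt) -> dict:
    # Assumed shape: system prompt passed as its own top-level field.
    return {
        "system": prompt.system,
        "messages": [{"role": "user", "content": prompt.user}],
    }
```

Only the two converter functions know anything vendor-shaped; the stored record survives a provider change untouched.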

A Model-Agnostic Strategy Builds Real Resilience

The promise of a model-agnostic architecture goes beyond just swapping one API for another when pricing changes. It creates a system that's resilient across multiple dimensions:

Operational resilience: When a provider has an outage, your system can fail over to alternatives automatically. When a model is deprecated, you have time to migrate gracefully rather than scrambling.

Compliance flexibility: Different regions and industries have different requirements around data residency and model transparency. A flexible architecture lets you integrate solutions that meet local compliance needs without rebuilding your entire system.

Economic leverage: When you can credibly switch providers, you have negotiating power on pricing and terms. When you're locked in, you're subject to whatever changes the vendor decides to make.

Perhaps most importantly, it creates technical confidence—your team can experiment with new models and approaches because the architecture supports change rather than resisting it.

The Path Forward: Evolution Over Revolution

If you're looking at your current single-model architecture and feeling overwhelmed, remember that this is a journey, not a destination. You don't need to rebuild everything overnight.

Start with the next feature or service you build. Implement it behind an abstraction layer. Set up basic model routing for one use case. Build evaluation harnesses for your most critical prompts. Each step reduces your exposure and builds organizational muscle for managing multi-model systems.

The teams that will thrive in the rapidly evolving AI landscape aren't necessarily the ones using the most cutting-edge models today. They're the ones who built systems that speak in capabilities rather than vendor names, that route intelligently rather than statically, and that treat model flexibility as a first-class architectural requirement.

The end of single-model lock-in isn't just coming—it's already here. The only question is whether your architecture is ready for it.

What's your team's biggest challenge in building model-agnostic systems? Are you starting from scratch or retrofitting existing applications? The architectural decisions you make today will determine your flexibility tomorrow—choose wisely.