The Vision Model Paradox: More Choice, More Complexity

The vision AI landscape in 2026 is a testament to incredible progress, offering developers and enterprises a dizzying array of powerful models. From massive multimodal giants to lean, efficient models designed for the edge, the options are plentiful. Yet, this abundance creates a new challenge: selection paralysis. It's tempting to reach for the model with the highest benchmark score, but that's a recipe for technical debt and unmet expectations. Picking the right Vision Language Model requires understanding how your needs align with model strengths, not just relying on performance metrics.

The real cost of a misaligned model isn't just the API bill or compute time; it's the failed pilot project, the sluggish user experience, or the security vulnerability that could have been avoided. This guide cuts through the noise, providing a framework to evaluate vision models based on the practical constraints and objectives of your specific use case.

Core Decision Framework: The Four Pillars of Model Selection

Before comparing specific models, anchor your search in these four foundational pillars. They will immediately narrow your field of viable candidates.

1. Deployment Environment: Where Will It Run?

This is your first and most critical filter. A model's architecture dictates where it can live.

- Cloud/API: Ideal for batch processing, applications without strict latency requirements, or teams that lack MLOps infrastructure. Proprietary models (e.g., GPT-4V, Claude 3) excel here with turnkey reliability.

- On-Device/Edge: Mandatory for real-time applications (robotics, AR), low/no connectivity environments, or when data privacy prohibits sending images externally. This domain is ruled by efficient open-source models, such as the smaller variants of LLaVA-NeXT or Qwen-VL.

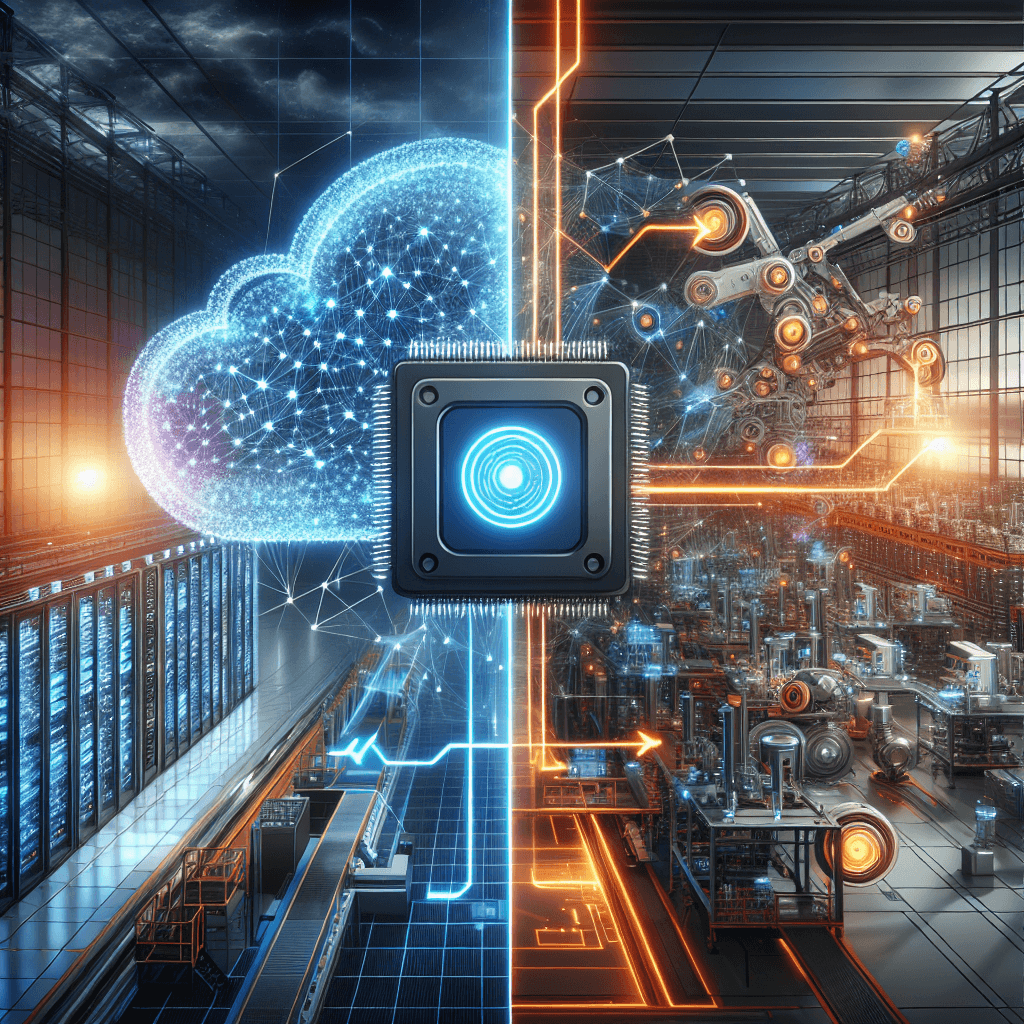

Top-tier VLMs should deploy to cloud, on-device, or edge environments while maintaining flexibility for costs, compliance, and runtime.

Actionable Takeaway: Define your latency budget and data sovereignty requirements first. If you need sub-100 ms responses or must keep data on-premises, your search starts and ends with models built for the edge.
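To make this first filter concrete, here is a minimal sketch of how the two hard constraints might be applied to a candidate list. The `Candidate` fields, the model labels, and the latency figures are all hypothetical placeholders; in practice the latency values would come from measurements on your own hardware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str               # placeholder label, not a real model
    runs_on_prem: bool      # can it be hosted inside your own network?
    p95_latency_ms: float   # assumed to be measured on YOUR target hardware


def shortlist(candidates, latency_budget_ms, data_must_stay_on_prem):
    """Keep only candidates that satisfy both hard constraints."""
    keep = []
    for c in candidates:
        if data_must_stay_on_prem and not c.runs_on_prem:
            continue  # violates data sovereignty
        if c.p95_latency_ms > latency_budget_ms:
            continue  # too slow for the latency budget
        keep.append(c)
    return keep


# Hypothetical candidates with made-up latency numbers.
candidates = [
    Candidate("hosted-api-model", runs_on_prem=False, p95_latency_ms=900.0),
    Candidate("small-edge-model", runs_on_prem=True, p95_latency_ms=80.0),
    Candidate("large-self-hosted-model", runs_on_prem=True, p95_latency_ms=450.0),
]

viable = shortlist(candidates, latency_budget_ms=100.0, data_must_stay_on_prem=True)
print([c.name for c in viable])  # -> ['small-edge-model']
```

Applying the hard constraints before any quality comparison keeps the later, more expensive evaluation work focused on models that could actually ship.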

2. Data & Task Specificity: Generalist or Specialist?

Are you building a general-purpose image Q&A tool or a system to detect microscopic cracks in composite materials? The answer dictates your path.

- General-Purpose VLMs: Great for broad scene understanding, descriptive captioning, or creative tasks. They work "out-of-the-box" but may lack precision on niche domains.

- Domain-Specific Needs: For medical imaging, industrial inspection, or scientific analysis, you'll likely need to fine-tune a model. Open-source models shine here: they offer privacy advantages when run locally and can often be adapted by fine-tuning on just a few hundred samples.

Actionable Takeaway: Audit your data. If you have unique, proprietary data, even a few hundred carefully labeled samples, put it to work. Many successful enterprises use their own proprietary data to create agents with precise skills rather than relying on general AI models alone.

3. Cost & Control: The Open-Source vs. Proprietary Balance

This is a strategic business decision as much as a technical one.

- Proprietary Models (Closed): Offer pay-as-you-go pricing that stays predictable until usage scales, minimal DevOps overhead, and consistent, high-quality outputs. They are the fastest path to integration.

- Open-Source Models: Shift costs from usage-based API fees to engineering time and infrastructure. The payoff is control, privacy, and long-term cost savings at scale. Reported figures from 2025 put the inference cost reduction for open-source VLMs at up to 60% compared to closed models, with competitive benchmark scores.
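The trade-off between usage-based fees and fixed infrastructure cost comes down to a break-even volume. The sketch below shows that arithmetic; every price in it is an invented placeholder, so substitute your own API quote and hosting estimate.

```python
def monthly_api_cost(images_per_month, price_per_image):
    """Pure pay-as-you-go: cost grows linearly with volume."""
    return images_per_month * price_per_image


def monthly_self_hosted_cost(images_per_month, fixed_monthly, marginal_per_image):
    """Fixed cost (hardware, engineering time) plus a small per-image cost."""
    return fixed_monthly + images_per_month * marginal_per_image


def break_even_volume(price_per_image, fixed_monthly, marginal_per_image):
    """Monthly volume above which self-hosting is cheaper, or None if it never is."""
    saving_per_image = price_per_image - marginal_per_image
    if saving_per_image <= 0:
        return None
    return fixed_monthly / saving_per_image


# Hypothetical inputs: $0.01 per image via an API, versus $6,000 per month
# of fixed cost plus $0.002 per image when self-hosting.
volume = break_even_volume(price_per_image=0.01, fixed_monthly=6000.0,
                           marginal_per_image=0.002)
print(f"Break-even at about {volume:,.0f} images per month")
```

With these placeholder numbers the crossover sits at roughly 750,000 images per month. Below that volume the API is cheaper; above it, self-hosting starts to pay off.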

4. Performance Reality: Benchmarks vs. Your Bench

Treat benchmarks with caution: they are a useful reference, but not the only one for choosing the right model for your use case. A model that aces MMMU or VQAv2 might still be woefully inefficient on your hardware or confused by your specific image format.

Actionable Takeaway: Create a small, representative evaluation dataset from your own domain. Test candidate models on your data, in your target environment. Performance on the target device can differ substantially from published results, so compare models on the device itself.
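A small evaluation harness along these lines is one way to do that. It is a sketch, not a full benchmark suite: `predict` stands in for whatever function wraps your candidate model, and the file names and labels below are invented for illustration.

```python
import math
import statistics
import time


def evaluate(predict, examples):
    """Run predict(image) over (image, expected_label) pairs.

    Returns accuracy plus median and 95th-percentile latency in milliseconds.
    """
    if not examples:
        raise ValueError("examples must not be empty")
    correct = 0
    latencies_ms = []
    for image, expected in examples:
        start = time.perf_counter()
        predicted = predict(image)
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
        correct += int(predicted == expected)
    latencies_ms.sort()
    # Nearest-rank 95th percentile over the sorted latencies.
    p95_index = max(0, math.ceil(0.95 * len(latencies_ms)) - 1)
    return {
        "accuracy": correct / len(examples),
        "median_latency_ms": statistics.median(latencies_ms),
        "p95_latency_ms": latencies_ms[p95_index],
    }


def dummy_predict(image):
    """Stand-in for a real model call; replace with your own wrapper."""
    return "defect" if "crack" in image else "ok"


# Hypothetical labeled examples (file name, expected label).
examples = [
    ("crack_01.png", "defect"),
    ("clean_01.png", "ok"),
    ("crack_02.png", "defect"),
    ("clean_02.png", "defect"),  # a defect the dummy model misses
]

report = evaluate(dummy_predict, examples)
print(report["accuracy"])  # -> 0.75
```

Running the same harness against each candidate on the target device gives accuracy and latency numbers that are directly comparable, which published benchmark tables cannot provide.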

Real-World Scenarios: Putting the Framework to Work

Scenario 1: The Manufacturing Defect Inspector

Need: Real-time visual inspection on the factory floor to identify surface defects. Data cannot leave the premises.

Framework Application:

1. Deployment: Must be on-edge (low latency, data privacy).

2. Task: Highly specific—requires fine-tuning on images of defects.

3. Cost/Control: Open-source is mandatory for on-prem deployment and fine-tuning.

4. Performance: Doesn't need 99.9% accuracy. Perfect accuracy isn't always required for ROI: in industrial settings, a model with only 50% accuracy can still save millions by flagging defects that previously went unnoticed.

Verdict: Choose a small, efficient open-source VLM (e.g., a quantized Phi-3-Vision), fine-tune it on several hundred defect images, and deploy it directly on the edge device.
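The performance point in this scenario is easier to judge with the arithmetic written out. The sketch below reads the 50% figure as the share of previously missed defects the model catches, which is an interpretation rather than something stated above, and every number in it is a hypothetical placeholder.

```python
def annual_savings(missed_defects_per_year, catch_rate, cost_per_escaped_defect,
                   false_alarms_per_year=0, cost_per_false_alarm=0.0):
    """Estimated yearly savings from catching a fraction of missed defects.

    Savings from caught defects, minus the cost of reviewing false alarms.
    """
    caught = missed_defects_per_year * catch_rate
    return (caught * cost_per_escaped_defect
            - false_alarms_per_year * cost_per_false_alarm)


# Hypothetical plant: 10,000 defects currently escape each year, each costing
# $400 in rework or returns; the model catches half of them and raises
# 20,000 false alarms that cost $5 each to review.
savings = annual_savings(
    missed_defects_per_year=10_000,
    catch_rate=0.5,
    cost_per_escaped_defect=400.0,
    false_alarms_per_year=20_000,
    cost_per_false_alarm=5.0,
)
print(f"${savings:,.0f} per year")  # -> $1,900,000 per year
```

The point of the exercise is that the value of a model depends on the cost of the errors it prevents and the errors it introduces, not on an accuracy figure taken in isolation.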

Scenario 2: The E-Commerce Content Moderator

Need: Automatically scan millions of user-uploaded product images for inappropriate content and generate alt-text.

Framework Application:

1. Deployment: Cloud/batch processing is fine.

2. Task: General-purpose (object detection, scene understanding, captioning).

3. Cost/Control: Need reliability and scale without building a large ML team. Predictable costs are key.

4. Performance: High recall on policy violations is critical.

Verdict: A proprietary model API is the best fit here. The integration is simple, performance is robust out-of-the-box, and costs scale directly with usage.
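The recall requirement from this scenario can be checked on a labeled sample before committing to a provider. The sketch below assumes the moderation step yields a violation score between 0 and 1 per image; that output shape, the scores, and the labels are all assumptions made for illustration.

```python
def recall_and_precision(scores, labels, threshold):
    """Compute recall and precision when images scoring >= threshold are flagged.

    scores: violation scores in [0, 1]; labels: True if the image truly violates policy.
    """
    tp = fp = fn = 0
    for score, is_violation in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_violation:
            tp += 1
        elif flagged and not is_violation:
            fp += 1
        elif not flagged and is_violation:
            fn += 1
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    return recall, precision


# Hypothetical scores and ground-truth labels for five images.
scores = [0.95, 0.80, 0.40, 0.30, 0.10]
labels = [True, True, True, False, False]

strict = recall_and_precision(scores, labels, threshold=0.5)
lenient = recall_and_precision(scores, labels, threshold=0.25)
print(lenient)  # -> (1.0, 0.75)
```

Lowering the threshold raises recall at the cost of more false positives, so the right setting depends on how much manual review capacity sits behind the automated scan.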

Conclusion: From Model Selection to Strategic Advantage

Choosing a vision model in 2026 is less about finding a silver bullet and more about strategic alignment. It's an exercise in understanding your own constraints—environmental, financial, and technical—and matching them to a model's inherent strengths. The most forward-thinking teams are already moving beyond using off-the-shelf models as-is. They are using capable open-source foundations as a starting point, infusing them with their unique proprietary data to build AI agents that possess precise, competitive skills unavailable to anyone else.

Your call to action is this: Before you look at another benchmark table, gather your stakeholders and whiteboard the answers to the four pillars. Define where the model must run, what specific task it must perform, what balance of cost and control you can manage, and how you will validate its performance in the real world. This process will not only lead you to a better technical choice but will also align your AI initiative with tangible business outcomes from day one.